2.1.The role of the lexical analyzer

2.1.1. Tasks.

The task of the lexical analyzer is to read the input characters from the source program and analyze them to produce a list of lexical items (vocabulary and its syntactic classification) along with some attribute information, saving them in a temporary table called the symbol table.

The lexical analyzer was designed with two ideas in mind:

a. Process all the input words of the source language and save them in a table called the symbol table.

b. Or to process each input word of the source language, the lexical analyzer is designed as a procedure called by the parser.

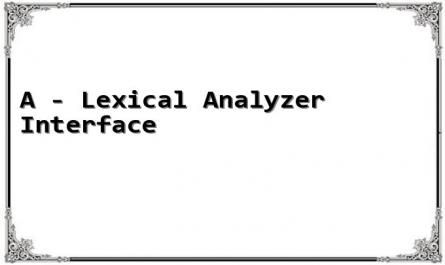

Lexical Analyzer

Table of results

Syntax analyzer

Source program

Symbol table (pre-installed)

Figure 2.1a - Interface of the lexical analyzer

The output of the lexical analyzer is a list of tokens stored in a temporary table called the token table and is the input to the parser.

In fact, the parser will call each token from the analyzer in turn to process, not call the entire token list of the source program at once.

When a request for the next token is received from the parser, the lexical analyzer reads the input character and looks it up in a pre-built representation table until it finds a token.

This interaction is shown in the following figure, where the lexical analyzer is designed as a procedure called by the parser, returning a token when called.

Source program

token

Symbol table (pre-installed)

Syntax analyzer

Lexical Analyzer

Get the next token

Figure 2.1 b- Interface of the lexical analyzer

2.1.2. Lexical analysis process

1) Remove unnecessary characters (comments, blank lines, newline symbols, unnecessary blank characters)

The translation process will consider all characters in the input line, so meaningless characters (blanks, tabs, newlines) or comments must be ignored. When the lexical analyzer ignores these whitespaces, the parser never cares about them again.

2) Identify symbols: identify word elements.

For example, concatenate digits to form a number and use it as a unit during translation. Let num be a token representing an integer. When a string of digits appears in the input stream, the parser sends num to the parser. The value of the integer is passed to the parser as an attribute of the token num.

3) Digitization of symbols: Since numbers are easier to process than strings, keywords, and names, strings are replaced by numbers, and digits are converted to actual numbers represented in the machine. Names are stored in a list of names, strings in a list of strings, and number strings in a list of constants.

Problems of the lexical analysis stage

There are several reasons to separate the lexical analysis phase from the syntactic analysis phase:

1. First, it makes the design simpler and easier to understand. For example, the parser will no longer have to deal with whitespace or comments because they are removed by the lexical analyzer.

2. The efficiency of the compiler will also be improved, thanks to some specialized processing programs that will significantly reduce the time it takes to read data from the program.

source and pool of tokens.

3. The portability of the compiler is also improved. Input character set characteristics and device differences can be isolated in the lexical analysis step. The representation of special symbols or non-standard symbols, such as the ( in Pascal, can be isolated in the lexical analyzer.

2.1.3. Lexicon, word element, pattern

When working with lexical analyzers, we will use the terms token, pattern , and lexeme with the following specific meanings:

1) Tokens are symbols that end in the grammar for a source language, such as: keywords, nominals, operators, punctuation, constants, strings, ...

- For programming languages, word elements can be classified into the following types:

+ keyword

+ names of constants, functions, variables

+ number

+ string of characters

+ operators

+ symbols.

Example 2.1 : position := initial + 10 * rate ;

We have the vocabulary position, :=, initial, +, 10, *, rate, ; In which position, initial, rate are vocabulary with the same syntactic meaning as names.

:= is assignment

+ is addition

* is multiplication

10 is a number

; is a semicolon

So in the above sentence there are 8 vocabulary words belonging to 6 word elements.

Syntactic analysis will work on tokens, not lexicons, for example, it will work on the concept of a number, not on 5 or 2; it will work on the concept of a name, not on a, b or c.

2) The lexeme of a token is a character string that represents that token.

3) A pattern is a rule that describes a set of lexical values associated with a certain token.

Some examples of how these terms are used are shown in the following table:

Token

Illustrated vocabulary | Description of the vocabulary sample | |

const | const | const |

if | if | if |

relation | <, <=, =, < >, >, >= | < or <= or = or <> or > or >= |

id | pi, count, d2 | Start with a letter followed by a letter, then a number. |

number | 3.1416, 0, 5 | Any constant |

literal | Hello | All letters between “ and “ except “ |

Maybe you are interested!

-

The Preposition “Auf” Viewed From a Cognitive Perspective Compared with Vietnamese

The Preposition “Auf” Viewed From a Cognitive Perspective Compared with Vietnamese -

Example Illustrating a Summary of an English Text

Example Illustrating a Summary of an English Text -

The relationship between travel motivation, destination image and destination choice - A case study of Binh Dinh province tourism destination - 1

The relationship between travel motivation, destination image and destination choice - A case study of Binh Dinh province tourism destination - 1 -

Emotions of a first-time mother - 3

Emotions of a first-time mother - 3 -

A Multifaceted View of Reality

A Multifaceted View of Reality

Table 2.1 - Token examples

2.1.4. Token properties

When multiple lexical patterns match a lexical value, the lexical analyzer must provide additional information for subsequent compilation steps. Therefore, for each token, the lexical analyzer includes information about the tokens in their associated attributes. Tokens influence parsing decisions; attributes influence the interpretation of tokens. A token and its attributes form a <token, tokenval> tuple .

Example 2.2 : The token and the accompanying attribute value of the position := initial + rate*10 statement are written as a sequence of tuples:

< name , pointer to position in symbol table >

< assignment , >

< name , pointer to initial in the symbol table >

< addition operator , >

< name , pointer to rate in the rate table >

< multiplication operator , >

< integer , integer value 10 >

Note that some tuples do not need attribute values, the first element is enough to identify the lexical value.

2.1.5. Vocabulary errors

Only a few errors are detected at the lexical analysis stage, because the lexical analyzer has many ways of looking at the source program. For example, the string fi is first seen in a C program with the context: fi ( a == f (x)) ... The lexical analyzer cannot tell whether this is an error in spelling the keyword if or an undeclared identifier. Since fi is a valid identifier, the lexical analyzer must

return a token and let another stage later determine the error. However, in some situations the error must be fixed for further analysis. The simplest strategy is " panic mode ": subsequent characters are removed from the remaining input string until a complete token is found. This technique sometimes causes confusion for the parsing stage, but is generally usable.

Some other troubleshooting strategies are:

1. Delete an extra character.

2. Insert a missing character.

3. Replace an incorrect character with a correct character.

4. Convert two consecutive characters.

2.2. Temporary storage of source programs

Reading each character in the source program takes a considerable amount of time, so it affects the speed of the compiler. To solve this problem, the design reads a string of characters stored in the buffer at a time. But reading like that is difficult because it is impossible to determine which string contains a complete word. And it is necessary to distinguish which string contains a complete word. There are 2 solutions as follows:

2.2.1. Buffer pair

* Structure:

- Divide the buffer into 2 halves, each half contains N characters (N = 1024, 4096, …).

- Use 2 pointers to search in buffer:

p1: (lexeme_beginning) is placed at the beginning of a lexeme.

p2: (forward): move over each character in the buffer to determine the word element.

Each time a read is made, N characters from the source program are read into each half of the buffer by a system read command. If the number of characters remaining in the source program is less than N, a special character eof is inserted into the buffer after the characters just read to signal that the source program has been read to the end.

Use two pointers to search the buffer. The character string between the two pointers is always the current lexeme. Initially, both pointers are placed at the beginning of each lexeme. The pointer p1 (lexeme_beginning) - the lexeme starting pointer - remains fixed at this position until the pointer p2 (forward) - moves through the buffer character by character to locate a token. When a lexeme for a token has been located, the pointer p1 moves up to match p2 and begins searching for a new lexeme.

E | = | M | * | C | * | * | 2 | EOF |

p1

p2

Figure 2.2 - Pair of two buffer halves

When pointer p2 reaches the boundary between the two buffers, the right half is filled with the next N characters in the source program. When pointer p2 reaches the end of the buffer, the left half is filled with N new characters and p2 is moved to the beginning of the buffer.

This buffer pair method usually works very well but the number of characters read ahead is limited and in some cases it may not recognize the token when pointer p2 has to traverse a distance larger than the buffer length. The formal algorithm for the operation of pointer p2 in the buffer:

if p2 is at the end of the first half then begin

end

Read the second half; p2 := p2 + 1;

else if p2 is at the end of the last half then begin

end

Read the first half;

Move p2 to the beginning of the buffer;

else p2 := p2 + 1

2.2.2. Guard lock

The buffer pair method requires that each time p2 moves, it checks to see if half the buffer is empty, which is inefficient because it requires two checks. To overcome this, we read only N-1 characters into each half of the buffer at a time, and the Nth character is a special character, usually eof. This reduces the check to one.

E | = | M | * | EOF | C | * | * | 2 | EOF |

p1

p2

Figure 2.3 - Eof guard lock at the end of each buffer zone

Formal algorithm for the operation of pointer p2 in the buffer: p2 := p2 + 1;

if p2↑ = eof then begin

if p2 is at the end of the first half then begin

end

Read the second half; p2 := p2 + 1;

else if p2 is at the end of the second half then begin

else

end

Read the first half;

Move p2 to the beginning of the first half;

end

/* EOF in the middle of the buffer indicates the end of the source program */ ends lexical analysis;

2.3. Token properties and identification

2.3.1. Token specification

a. Strings and languages

A string is a finite set of characters. The length of the string is the number of characters in the string. The empty string ε is a string of length 0.

A language is a set of strings. A language can consist of only an empty string, denoted by ∅ .

b. Operations on language

- Union of L and M : L ∪ M = { s | s ∈ L or s ∈ M }

- Concatenation of L and M: LM = { st | s ∈ L and t ∈ M }

- Kleen closure of L: L * = ∞ ∪ i = 0 L i

(Concatenation of 0 or more L)

- Positive closure of L: L + = ∞ ∪ i = 1 L i

(Composition of 1 or more L)

Example 2.3 : L = {A, B, ..., Z, a, b, ..., z }

D = { 0, 1, , ..., 9 }

1) L ∪ D is the set of letters and numbers.

2) LD is a set of strings consisting of one letter and one digit.

3) L4 is the set of all 4-letter strings.

4) L * is the set of all strings of letters including the empty string.

5) L( L ∪ D)* is the set of all strings starting with a letter followed by a letter or digit.

6) D + is the set of all strings consisting of one or more digits.

c. Regular Expression

In Pascal, a nominal expression is an element of the set L (L ∪ D)*. We can write: nominal expression = letter (letter | digit)* - This is a regular expression.

Regular expressions are built on a set of rules. Each regular expression r specifies a language L(r).

The following are the rules for defining regular expressions on the Alphabet set ∑.

1) ε is a regular expression specifying an empty string {ε }.

2) If a ∈ ∑ then a is a regular expression r specifying the set of strings {a}

3) Suppose r and s are regular expressions specifying the languages L(r) and L(s), we have:

a. (r) | (s) is a regular expression specifying L(r) ∪ L(s)

b. (r) (s) is a regular expression specifying L(r)L(s).

c. (r)* is a regular expression specifying (L(r))*

Convention:

The closure operator * has highest precedence and is left associative. The compound operator has second precedence and is left associative.

The union operator | has lowest precedence and is left associative.

Example 2.4 : Given ∑ = { a, b}

1) Regular expression a | b specification {a, b}

2) The regular expression (a | b) (a | b) specifies the set {aa, ab, ba, bb}. This set can be specified by the following equivalent regular expression: aa | ab | ba | bb.

3) Regular expression a* specifies { ε, a, aa, aaa, ... }

4) The regular expression (a | b)* specifies {(, a, b, aa,bb, ...}. This set can be specified by (a*b* )*.

5) Regular expression a | a* b specification {a, b, ab, aab,... }

Two regular expressions specifying the same set we say they are equivalent and write r = s.