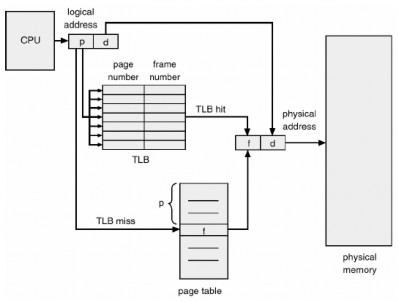

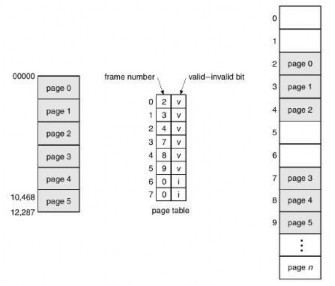

by the CPU dispatcher to define the hardware page table when a process is allocated the CPU. Thus, paging increases context-switch time.

2) Hardware support

Each operating system has its own method of storing page tables. Most allocate a page table for each process. A pointer to the page table is stored with the other register values (such as the instruction counter) in the process control block. When the dispatcher is told to start a process, it must reload the user registers and define the correct hardware page-table values from the stored user page table.

The hardware implementation of the page table can be done in several ways. In the simplest case, the page table is implemented as a set of dedicated registers. These registers should be built with very high-speed logic to make page-address translation efficient. Every access to memory must go through the page map, so efficiency is a major consideration. The CPU dispatcher reloads these registers just as it reloads the other registers. Instructions to load or modify the page-table registers are, of course, privileged, so that only the operating system can change the memory map. The DEC PDP-11 is an example of such an architecture. The address consists of 16 bits, and the page size is 8 KB. The page table therefore contains 8 entries (2^16 / 2^13 = 8), which are kept in fast registers.
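The PDP-11 arithmetic above can be checked with a short sketch. The constant and function names here are illustrative and do not come from any real PDP-11 interface; only the 16-bit address and the 8 KB page size are taken from the text.

```python
# Illustrative sketch: how a 16-bit logical address splits into a page
# number and an offset when the page size is 8 KB (values from the text).
ADDRESS_BITS = 16
PAGE_SIZE = 8 * 1024  # 8 KB

# 8 KB = 2**13, so the low 13 bits of an address are the offset.
OFFSET_BITS = PAGE_SIZE.bit_length() - 1
# The remaining high bits select the page: 2**(16 - 13) = 8 pages.
NUM_PAGES = 1 << (ADDRESS_BITS - OFFSET_BITS)


def split_address(logical_address):
    """Return (page_number, offset) for a 16-bit logical address."""
    page_number = logical_address >> OFFSET_BITS
    offset = logical_address & (PAGE_SIZE - 1)
    return page_number, offset


if __name__ == "__main__":
    print(NUM_PAGES)              # number of page-table entries
    print(split_address(0xFFFF))  # highest address: last page, last byte
```

With only 8 entries, holding the whole table in registers is practical, which is why this design suits small page tables.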

Using registers for the page table is appropriate only if the page table is reasonably small (e.g., 256 entries). Most contemporary computers, however, allow very large page tables (e.g., 1 million entries). For these machines, using fast registers to implement the page table is not feasible. Instead, the page table is kept in main memory, and a page-table base register (PTBR) points to the page table. Switching page tables then requires changing only this one register, which substantially reduces context-switch time.
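The PTBR scheme can be sketched as a toy model. Everything in this sketch is an assumption made for illustration: the `Machine` class, the 4 KB page size, and the word-addressed `memory` list are hypothetical and do not describe any particular hardware.

```python
# Toy model of a page table kept in main memory and located through a
# page-table base register (PTBR). All names and sizes are hypothetical.
PAGE_SIZE = 4096  # assumed page size for the example


class Machine:
    def __init__(self, memory_words):
        self.memory = [0] * memory_words  # main memory, one entry per word
        self.ptbr = 0                     # base address of the current page table

    def install_page_table(self, base, frames):
        """Store a process's page table (page -> frame) starting at `base`."""
        for page, frame in enumerate(frames):
            self.memory[base + page] = frame

    def context_switch(self, new_base):
        """Switching page tables changes only one register."""
        self.ptbr = new_base

    def translate(self, logical_address):
        """Map a logical address to a physical address via the in-memory table."""
        page, offset = divmod(logical_address, PAGE_SIZE)
        frame = self.memory[self.ptbr + page]  # extra memory access for the lookup
        return frame * PAGE_SIZE + offset


if __name__ == "__main__":
    m = Machine(64)
    m.install_page_table(0, [5, 9])   # page table of a first process
    m.install_page_table(16, [2, 7])  # page table of a second process
    m.context_switch(0)
    print(m.translate(4100))          # page 1, offset 4, first process
    m.context_switch(16)
    print(m.translate(4100))          # same logical address, second process
```

The sketch also shows the cost of this design: each translation reads the page table from memory before the data itself can be accessed.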

Mobile Phone Usage in Hanoi Inner City Area


- Test the relationship between demographic variables and consumer behavior for Mobile Marketing activities

The analysis uses the Chi-square test (χ²) with statistical hypotheses H0 and H1 and a significance level of α = 0.05. If the p-value (reported as Sig. in SPSS) is less than or equal to α, the hypothesis H0 is rejected; otherwise H0 is not rejected. With this testing procedure, the study can evaluate differences in behavioral trends between demographic groups.
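The decision rule can be illustrated with a minimal sketch of a Pearson Chi-square test on a 2×2 table, written in plain Python rather than SPSS. The counts in the example are hypothetical and are not taken from the survey; the function names are illustrative, and no continuity correction is applied. For one degree of freedom, the p-value of a statistic x equals erfc(√(x/2)).

```python
import math

ALPHA = 0.05  # significance level used in the study


def chi_square_2x2(table):
    """Pearson Chi-square statistic and p-value for a 2x2 contingency table.

    `table` is ((a, b), (c, d)) of observed counts; df = 1, no correction.
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed - expected) ** 2 / expected
    # Survival function of the Chi-square distribution with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(statistic / 2))
    return statistic, p_value


def reject_h0(p_value, alpha=ALPHA):
    """H0 is rejected when the p-value is less than or equal to alpha."""
    return p_value <= alpha


if __name__ == "__main__":
    # Hypothetical counts: rows are two demographic groups,
    # columns are "did" / "did not" show some behavior.
    hypothetical = ((30, 70), (50, 50))
    stat, p = chi_square_2x2(hypothetical)
    print(round(stat, 3), round(p, 4), reject_h0(p))
```

Tables with more rows or columns need the general Chi-square distribution with (rows − 1)(columns − 1) degrees of freedom, which a statistics package such as SPSS supplies.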

CHAPTER 4

RESEARCH RESULTS

Over two months, 1,100 survey questionnaires were distributed to mobile phone users in the inner city of Hanoi through direct interviews, email, and online questionnaires. After erroneous questionnaires were checked and eliminated, the study retained 858 complete questionnaires, a rate of about 78%. Because the research subjects of the thesis are only people who currently use mobile phones, questionnaires in which the respondent reported not using a mobile phone were excluded from the analysis. The number of suitable questionnaires included in the statistical analysis was 835.

4.1 Demographic characteristics of the sample

The structure of the survey sample is divided and statistically analyzed according to criteria such as gender, age, occupation, education level and personal income. (Detailed statistical table in Appendix 6)

- Gender structure: Of the 835 completed questionnaires, 49.8% of respondents were male, equivalent to 416 people, and 50.2% were female, equivalent to 419 people. The survey results of the study are completely consistent with the gender ratio in the population structure of Vietnam in general and Hanoi in particular (Male/Female: 49/51).

- Age structure: 36.6% of respondents (306 people) are under 23 years old. People aged 23-34 account for the highest proportion, 44.8% (374 people), while those aged 35-45 and over 45 number 70 and 85 people, or 8.4% and 10.2% respectively. Young people therefore make up a large share of the survey participants, whereas the two middle-aged groups (35-45 and >45) participated at a low rate. This is consistent with the fact that Mobile Marketing is identified as a marketing service aimed at young people (people under 35 years old).

- Structure by educational level: among 835 valid responses, 541 respondents had university degrees, accounting for the highest proportion of ~ 75%, 102 had secondary school degrees, ~ 13.1%, and 93 had post-graduate degrees, ~ 11.9%.

- Occupational structure: office workers and civil servants are the group with the highest rate of participation at 39.4%, followed by students at 36.6%. Self-employed people account for 12%, retirees and housewives for 7.8%, and other occupational groups for 4.2%. The student group has the same rate as the group aged <23 (36.6%), which supports the consistency of the survey data. In addition, the distribution by occupation is close to the sample division rate in chapter 3. It can therefore be concluded that the survey data is suitable for analysis.

- Income structure: the group with income from 3 to 5 million VND has the highest rate, 39% of all respondents. This is consistent with the income structure of Hanoi residents and corresponds to the average income of civil servants and office workers. People with no income account for 23%, those with income under 3 million VND for 13%, and those with income over 5 million VND for 25%.

4.2 Mobile phone usage in Hanoi inner city area

According to the survey results, most respondents said they had used the phone for more than 1 year, specifically: 68.4% used mobile phones from 4 to 10 years, 23.2% used from 1 to 3 years, 7.8% used for more than 10 years. Those who used mobile phones for less than 1 year accounted for only a very small proportion of ~ 0.6%. (Table 4.1)

Table 4.1: Time spent using mobile phones

| Valid | Frequency | Percent (%) | Valid percent (%) | Cumulative percent (%) |
|---|---|---|---|---|
| <1 year | 5 | 0.6 | 0.6 | 0.6 |
| 1-3 years | 194 | 23.2 | 23.2 | 23.8 |
| 4-10 years | 571 | 68.4 | 68.4 | 92.2 |
| >10 years | 65 | 7.8 | 7.8 | 100.0 |
| Total | 835 | 100.0 | 100.0 | |
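The percentage and cumulative percentage columns of Table 4.1 follow directly from the frequencies. A minimal sketch of that calculation is shown here; the function name `frequency_table` is illustrative, and the counts are the ones reported in the table.

```python
def frequency_table(counts):
    """Build rows of (label, frequency, percent, cumulative percent).

    `counts` maps each category label to its frequency, in display order.
    Percentages are rounded to one decimal place.
    """
    total = sum(counts.values())
    rows = []
    running = 0
    for label, freq in counts.items():
        running += freq
        percent = round(100 * freq / total, 1)
        cumulative = round(100 * running / total, 1)
        rows.append((label, freq, percent, cumulative))
    return rows


if __name__ == "__main__":
    # Frequencies reported in Table 4.1 (835 valid questionnaires).
    usage_time = {"<1 year": 5, "1-3 years": 194, "4-10 years": 571, ">10 years": 65}
    for row in frequency_table(usage_time):
        print(row)
```

Computing the cumulative column from the running count, rather than by summing rounded percentages, avoids accumulating rounding error.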

The survey figures on how long consumers in the inner city of Hanoi have used mobile phones are impressive for a developing country like Vietnam and indicate that Vietnamese consumers have considerable experience with this high-tech device. Moreover, the fact that most surveyed consumers have used mobile phones for a relatively long time (4-10 years) suggests that the mobile phone has become an important and essential item in people's daily lives.

When asked about the mobile phone network they are using, 31% of respondents said they use the Viettel network, 29% the Mobifone network, 27% the Vinaphone network, and 13% the networks of other providers such as EVN Telecom, S-Fone, Beeline, and Vietnamobile (Figure 4.1).

Figure 4.1: Mobile phone network in use

Compared with the announced market shares of mobile telecommunications service providers in Vietnam (Viettel: 36%, Mobifone: 29%, Vinaphone: 28%, remaining networks: 7%), the survey results do not differ much. The one notable difference is the share of the other networks: because Hanoi is one of the two main markets of the small networks, their market share in this area is naturally higher than in the country as a whole.

According to a report by NielsenMobile (2009) [8], prepaid subscribers accounted for 95% of all mobile phone subscribers in Hanoi. The results of this survey, however, show that the share of prepaid subscribers has fallen by more than 20 percentage points, to 70.8%. Conversely, the share of postpaid subscribers has risen from 5% in 2009 to 19.2%. Those using both types of subscription at the same time account for 10.1% (Table 4.2).

Table 4.2: Types of mobile phone subscribers

| Valid | Frequency | Percent (%) | Valid percent (%) | Cumulative percent (%) |
|---|---|---|---|---|
| Prepaid | 591 | 70.8 | 70.8 | 70.8 |
| Postpaid | 160 | 19.2 | 19.2 | 89.9 |
| Both of the above | 84 | 10.1 | 10.1 | 100.0 |
| Total | 835 | 100.0 | 100.0 | |

The above figures show the change in the psychology and consumption habits of Vietnamese consumers towards mobile telecommunications services, when the use of prepaid subscriptions and junk SIMs is replaced by the use of two types of subscriptions for different purposes and needs or switching to postpaid subscriptions to enjoy better customer care services.

In addition, the largest group of respondents spends a medium amount on mobile phone services, from 100 to 300 thousand VND (406 people, or 48.6% of all respondents). The high spending level (> 500 thousand VND) has the fewest people, only 8.4%, whereas the low spending level (under 100 thousand VND) accounts for the second-highest proportion, 25.4%. People with low spending levels are mainly students and retirees/housewives, who have little need to use the phone or mainly use promotional SIM cards (Table 4.3).

Table 4.3: Spending on mobile phone charges

| Valid (VND) | Frequency | Percent (%) | Valid percent (%) | Cumulative percent (%) |
|---|---|---|---|---|
| <100,000 | 212 | 25.4 | 25.4 | 25.4 |
| 100,000-300,000 | 406 | 48.6 | 48.6 | 74.0 |
| 300,000-500,000 | 147 | 17.6 | 17.6 | 91.6 |
| >500,000 | 70 | 8.4 | 8.4 | 100.0 |
| Total | 835 | 100.0 | 100.0 | |

The statistics in Table 4.3 are similar to the NielsenMobile (2009) survey results, in which 73% of mobile phone users had medium spending levels and only 13% had high spending levels.

The survey results also show that 31.9% of respondents, nearly one-third, send more than 10 SMS messages per day, which is roughly one message or more for every working hour. Those with a medium SMS volume (3 to 10 messages per day) account for 51.1%, and those with a low SMS volume (fewer than 3 messages per day) account for 17% (Table 4.4).

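Table 4.4: Number of SMS messages sent per day

| Messages per day | Frequency | Percent (%) | Valid percent (%) | Cumulative percent (%) |
|---|---|---|---|---|
| <3 messages | 142 | 17.0 | 17.0 | 17.0 |
| 3-10 messages | 427 | 51.1 | 51.1 | 68.1 |
| >10 messages | 266 | 31.9 | 31.9 | 100.0 |
| Total | 835 | 100.0 | 100.0 | |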

As with messages sent, respondents who receive a medium number of messages (3 to 10 per day) form the largest group, at about 55%. They are followed by those who receive a high number of messages (over 10 per day), at about 24%, while those who receive a low number (under 3 per day) are again the smallest group, at 21% (Table 4.5).

Table 4.5: Number of SMS messages received per day

| Messages per day | Frequency | Percent (%) | Valid percent (%) | Cumulative percent (%) |
|---|---|---|---|---|
| <3 messages | 175 | 21.0 | 21.0 | 21.0 |
| 3-10 messages | 436 | 55.0 | 55.0 | 76.0 |
| >10 messages | 197 | 24.0 | 24.0 | 100.0 |
| Total | 835 | 100.0 | 100.0 | |

Comparing Tables 4.4 and 4.5 shows that the distributions of messages sent and messages received per day are broadly consistent with each other.

4.3 Current status of SMS advertising and Mobile Marketing

According to the interview results, in the 3 months up to the time of the survey, 94% of respondents (785 people) said they had received advertising messages, while only 6% (50 people) had not (Table 4.6).

Table 4.6: Percentage of people receiving advertising messages in the last 3 months

| Received advertising messages | Frequency | Percent (%) | Valid percent (%) | Cumulative percent (%) |
|---|---|---|---|---|
| Yes | 785 | 94.0 | 94.0 | 94.0 |
| No | 50 | 6.0 | 6.0 | 100.0 |
| Total | 835 | 100.0 | 100.0 | |

The results in Table 4.6 show that consumers in the inner city of Hanoi are very familiar with advertising messages. This result also provides a basis for assessing the knowledge, experience and understanding of the respondents, which is one of the important factors determining the accuracy of the survey results.

In addition, although most respondents said they had received promotional messages, only 24% of them had ever registered to receive such messages. The remaining 76% had not registered but still received promotional messages every day. This is the first sign of weaknesses and lax management of this activity in Vietnam (Table 4.7).



Car body electrical practice - 8


If the voltage is out of specification, replace the wire or connector.

If the voltage is within specification, install the front fog light relay and follow step 5.

Step 5 Check the front fog light switch

- Remove the D4 connector of the fog light switch

- Use a multimeter to measure the resistance of the front fog light switch.

| Measurement location | Condition | Standard |
| --- | --- | --- |
| D4-3 (BFG) - D4-4 (LFG) | Front fog light switch OFF | > 10 kΩ |
| D4-3 (BFG) - D4-4 (LFG) | Front fog light switch ON | < 1 Ω |

- Standard resistance

D4 connector is located on the combination switch assembly.

If the resistance is out of specification, replace the combination switch (the fog light switch is located in the combination switch).

If the resistance is within specification, follow step 6.
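The pass/fail decision in step 5 can be sketched as a small function. This is only an illustration of the table's thresholds (more than 10 kΩ with the switch OFF, less than 1 Ω with it ON); the function name and return strings are hypothetical and not part of the manual.

```python
def check_fog_switch(resistance_off_ohm: float, resistance_on_ohm: float) -> str:
    """Compare D4-3 (BFG) - D4-4 (LFG) readings with the step 5 standard.

    An open-circuit reading ("OL" on many multimeters) can be passed
    as float("inf").
    """
    off_ok = resistance_off_ohm > 10_000   # switch OFF: more than 10 kOhm
    on_ok = resistance_on_ohm < 1          # switch ON: less than 1 Ohm
    if off_ok and on_ok:
        return "within specification: go to step 6"
    return "out of specification: replace the combination switch"


# Hypothetical readings, for illustration only.
print(check_fog_switch(float("inf"), 0.3))
print(check_fog_switch(float("inf"), 25.0))
```

Both conditions must hold, because a switch that reads correctly in only one position is still faulty.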

Step 6 Check wiring and connectors (front fog light relay-light selector switch)

- Disconnect connector D4 of the combination switch assembly

- Use a voltmeter to measure the voltage at connector D4 on the wiring-harness side.

| Measurement location | Light control switch position | Standard |
| --- | --- | --- |
| D4-3 (BFG) - battery negative (-) terminal | TAIL | 11 to 14 V |

D4 connector on the wiring-harness side of the combination switch assembly

If the voltage is out of specification, replace the wire or connector.

If the voltage is within specification, one of the previous measurements may have been in error.

Step 7 Check the front fog lights

- Remove the front fog light electrical connector.

- Apply battery voltage to the fog light terminals.

Connectors B8 and B9 of the front fog lights, component side

| Power supply location | Standard condition |
| --- | --- |
| Battery positive terminal - Terminal 2; battery negative terminal - Terminal 1 | Front fog light illuminates |

- If the light does not come on, replace the bulb.

If the light comes on, reconnect the connector and continue to step 8.

Step 8 Check wiring and connectors (relay and front fog lights)

- Disconnect the B8 and B9 connectors of the front fog lights.

- Use a voltmeter to measure voltage at the following locations:

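| Measurement location | Switch position | Standard |
| --- | --- | --- |
| B8-2 - battery negative (-) terminal | Ignition switch ON, light control switch at TAIL, fog light switch ON | 11 to 14 V |
| B9-2 - battery negative (-) terminal | Ignition switch ON, light control switch at TAIL, fog light switch ON | 11 to 14 V |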

B8 and B9 connectors on the wiring-harness side of the front fog lights

If the voltage is out of specification, repair or replace the wiring or connector. If it is within specification, one of the previous measurements may have been in error.
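The voltage checks in steps 6 and 8 use the same 11 to 14 V window, so they can be sketched with one helper. This is a minimal illustration: the function names are hypothetical, and treating both ends of the range as acceptable is an assumption.

```python
# Standard voltage window quoted in the tables for steps 6 and 8.
# Assumption: both end values (11 V and 14 V) count as within specification.
V_MIN, V_MAX = 11.0, 14.0


def voltage_in_spec(volts: float) -> bool:
    """Return True if a reading lies inside the 11 to 14 V window."""
    return V_MIN <= volts <= V_MAX


def check_step8(readings: dict[str, float]) -> str:
    """readings maps a terminal name ("B8-2", "B9-2") to the measured volts."""
    bad = [name for name, volts in readings.items() if not voltage_in_spec(volts)]
    if bad:
        return "repair or replace wiring/connector for: " + ", ".join(sorted(bad))
    return "within specification: recheck earlier measurements"


# Hypothetical readings, for illustration only.
print(check_step8({"B8-2": 12.4, "B9-2": 3.0}))
```

Reporting the failing terminal by name shows which side of the harness to inspect first.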

2.2.4. Procedure for removing, installing and adjusting fog lights

1. Removal procedure

- Remove the front fender liner

Use a screwdriver to remove the 3 screws, then remove the front section of the front fender liner.

- Remove the fog light assembly

+ Disconnect the connector.

+ Use a screwdriver to remove the 3 screws and remove the fog light cover.

+ Rotate the fog light bulb in the direction indicated by the arrow in the figure and remove it from the fog light assembly.

2. Installation sequence

- Rotate the fog light bulb in the direction indicated by the arrow in the figure and install it into the fog light assembly.

- Use a screwdriver to install the fog light cover

- Connect the electrical connector.

Attention: Be careful not to damage the plastic thread on the lamp assembly.

- Install the front fender liner

Use a screwdriver to install the front fender liner with 3 screws.

3. Prepare the vehicle for fog light aim adjustment

Prepare the vehicle as follows:

- Make sure there is no damage or deformation to the vehicle body around the fog lights.

- Fill the fuel tank.

- Top up the engine oil to the specified level.

- Top up the engine coolant to the specified level.

- Inflate the tires to the specified pressure.

- Place the spare tire, tools and jack in their original positions.

- Do not leave any load in the luggage compartment.

- Let a person weighing about 75 kg sit in the driver's seat.

4. Prepare to check the fog light aim

a/ Prepare the vehicle status as follows:

- Place the vehicle in a location dark enough to see the cutoff line clearly. The cutoff line is the dividing line below which light from the fog lights can be seen and above which it cannot.

- Place the car perpendicular to the wall.

- Keep a distance of 7.62 m between the center of the fog lamp and the wall.

- Park the car on level ground.

- Press the car down a few times to stabilize the suspension.

Note: A distance of approximately 7.62 m between the vehicle (fog light center) and the wall is required to adjust the aim correctly. If 7.62 m is not available, set the distance to exactly 3 m to check and adjust the fog light aim. (Because the target area varies with the distance, follow the instructions shown in the figure.)

b/ Prepare a piece of thick white paper about 2 m high and 4 m wide to use as a screen.

c/ Draw a vertical line through the center of the screen (line V).

d/ Set the screen as shown in the figure.

Note:

- Keep the screen perpendicular to the ground.

- Align the V line on the screen with the center of the vehicle.

e/ Draw the reference lines (H, V LH and V RH) on the screen as shown in the figure.

HINT:

Mark the fog light center marks on the screen. If the center mark cannot be seen on the fog light, use the center of the fog light bulb or the manufacturer's name mark on the fog light as the center mark.

H line (fog light height):

Draw a horizontal line across the screen so that it passes through the center marks. Line H should be at the same height as the center mark of the fog light bulb.

Line V LH, V RH (center mark position of left fog light LH and right fog light RH):

Draw two vertical lines so that they intersect line H at the center marks.
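The screen layout described above can be sketched numerically. The example below is an illustration only: it assumes the two fog lights are symmetric about the vehicle center line (line V) and that light travels in straight lines, so positions on the screen scale in proportion to the distance. All function names and sample dimensions are hypothetical; the target area shown in the manual's figure takes precedence.

```python
from dataclasses import dataclass

REFERENCE_DISTANCE_M = 7.62  # screen distance quoted in the procedure


@dataclass
class ReferenceLines:
    h_height_mm: float      # height of line H above the ground
    v_lh_offset_mm: float   # position of line V LH relative to line V (negative side)
    v_rh_offset_mm: float   # position of line V RH relative to line V (positive side)


def reference_lines(lamp_center_height_mm: float, lamp_spacing_mm: float) -> ReferenceLines:
    """Place H at the lamp center height and V LH / V RH at each lamp center.

    Assumption: the lamps sit symmetrically about the vehicle center line.
    """
    half = lamp_spacing_mm / 2
    return ReferenceLines(lamp_center_height_mm, -half, half)


def scale_to_distance(offset_mm: float, distance_m: float,
                      reference_m: float = REFERENCE_DISTANCE_M) -> float:
    """Scale an offset specified at the reference distance to another distance."""
    return offset_mm * distance_m / reference_m


# Hypothetical dimensions, for illustration only.
lines = reference_lines(lamp_center_height_mm=350, lamp_spacing_mm=1200)
print(lines)
print(scale_to_distance(76.2, distance_m=3.0))
```

The proportional scaling is why the note says the target area changes when the 3 m distance is used instead of 7.62 m.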

5. Check the fog light aim

a/ Cover the fog light on the other side, or disconnect its connector, so that light from the fog light not being checked does not affect the aim check.

b/ Start the engine.

c/ Turn on the fog lights and make sure that the cutoff (dividing) line falls within the standard area shown in the figure.

6. Adjust the fog light aim

Use a screwdriver to turn the aiming screw until the fog light beam falls within the standard area.

Note: If the screw is turned too far, loosen it and then tighten it again, so that the final turn of the aiming screw is clockwise.

3. Self-study questions

1. Describe the operating principle of a lighting system with an automatic headlight function.

2. Describe the operating principle of a lighting system with headlights that swivel when cornering.

3. Draw the diagram and wire the lighting system of a Hyundai Porter.

4. Draw the diagram and wire the lighting system of a 1992 Honda Accord.

5. Draw the lighting circuit of a 1993 Toyota Lexus.

LESSON 3 MAINTENANCE AND REPAIR OF SIGNAL SYSTEM

I. LESSON OBJECTIVES

After completing this lesson, students will be able to:

- Distinguish between the types of signals used on cars.

- Correctly describe common symptoms and the suspected areas of the fault.

- Connect signal circuits in accordance with technical requirements.

- Disassemble, install, check, maintain and repair the signal system in accordance with technical requirements.

- Ensure work safety and industrial hygiene.

II. LESSON CONTENT

1. General description

The signal system on a car produces signals that inform other road users of the vehicle's operating status, such as stopping, parking, braking, reversing and turning.

Signals are given either by light, such as headlamps, brake lights and turn signals, or by sound, such as horns and reversing chimes.

As in the lighting system, a signal system circuit usually consists of a battery, fuses, wiring, relays, electrical loads and control switches. Only some of the signal system switches are on the combination switch. The switches for the other signals are usually located elsewhere, such as on the gearbox or at the brake pedal.

2. Maintenance and repair

2.1. Turn signals and hazard lights

The installation location of the turn signals is shown in Figure 3.1. The turn signal control switch is located in the combination switch under the steering wheel. Moving this switch to the right or left makes the right or left turn signals flash.