estimated by $S_e^2$. The difference is no longer within the range of random error.

In addition to the noise error, the statistical error then also contains a systematic error caused by the model's mismatch with the object of study.

In this case, to obtain a compatible model, one of the following solutions can be chosen:

+ Make the model more complex by raising its order.

+ Repeat the experiment with a smaller range and variation of input parameters.

e. Construct a graph of the influence of input factors on output parameters

Based on the obtained experimental model, we can construct a graph of the influence of input parameters X on output parameters, which are the specific energy cost and productivity of the milling machine.

2.5.3. Multifactorial experiment

To use the multifactorial experimental method, the following conditions are required [8]:

+ The output parameters must be highly concentrated, meaning that the values obtained when the same experiment is repeated many times do not differ too much.

+ Influencing factors must be controllable and they must be independent of each other.

+ The relationship between optimal parameters and influencing factors is expressed by equations and meets the following conditions:

- Must be a differentiable function.

- There is only one extremum within the range of variation of the factors.

The multifactorial experimental design method is performed according to the following steps:

- Prepare measuring instruments, experimental equipment and machinery.

- Choose the appropriate option for the experiment.

- Organize experiments.

- Process the experimental data.

- Analyze and interpret the results obtained using the terminology of the respective scientific fields.

a. Select experimental planning options and establish experimental matrix

The experimental plan is chosen based on the results of the single-factor experiments. If those results show a nonlinear relationship, the first-order experiments can be skipped and the experiments carried out directly according to a second-order plan.

Among the experimental plans, the central composite plan is the earliest plan, but is still widely used in research today.

Total number of experiments to be performed:

$$N = 2^k + N_\alpha + N_0 \tag{2.23}$$

In which:

$2^k$ - Core (factorial) experiments;

$k$ - Number of influencing factors; for $k = 2$, $2^k = 2^2 = 4$;

$N_\alpha$ - Experiments at the star points;

$N_0$ - Experiments at the center.

The variation of each factor in the experimental region covers the base, upper and lower levels. These values are selected based on the analysis of the single-factor results.

Among the levels of factor $X_i$, the most important is the base level $X_{i0}$, which is determined by the formula:

$$X_{i0} = \frac{X_{i\min} + X_{i\max}}{2} \tag{2.24}$$

The range of variation of factor $X_i$ is then:

$$\varepsilon_i = X_{i\max} - X_{i0} = X_{i0} - X_{i\min} \tag{2.25}$$

Natural values are converted to coded form by:

$$x_i = \frac{X_i - X_{i0}}{\varepsilon_i} \tag{2.26}$$

Where: $x_i$ - Coded value;

$X_i$ - Actual value of the $i$-th factor.

In coded form, the lower level of each factor has the value -1, the base level has the value 0, and the upper level has the value +1.
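The coding in formulas (2.24) to (2.26) can be illustrated with a minimal Python sketch. The function names and the factor limits (200 and 400, in arbitrary units) are hypothetical and chosen only for illustration; they are not values from the study.

```python
def base_and_range(x_min, x_max):
    """Return the base level X_i0 (2.24) and the variation range eps_i (2.25)."""
    x0 = (x_min + x_max) / 2
    eps = x_max - x0
    return x0, eps


def to_coded(x, x_min, x_max):
    """Convert a natural value X_i to its coded value x_i (2.26)."""
    x0, eps = base_and_range(x_min, x_max)
    return (x - x0) / eps


# Hypothetical factor varied between 200 and 400 (arbitrary units).
levels = [to_coded(x, 200, 400) for x in (200, 300, 400)]
print(levels)  # lower, base and upper level in coded form
```

The lower, base and upper levels map to -1, 0 and +1, as stated in the text.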

To serve as a basis for organizing the experiments and processing the data later, we create a comparison table between the real values and the coded values of each factor (Table 2.1) and build an experimental matrix (Table 2.2) according to the principle that the experiments are completely independent.

Table 2.1. Coding of influencing factors

| Level of variation | Coded value | X1 | X2 |
|---|---|---|---|
| Upper level | +1 | | |
| Base level | 0 | | |
| Lower level | -1 | | |
| Range of variation | ε | | |


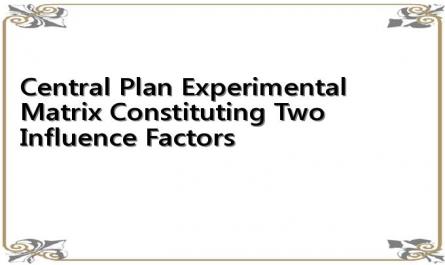

Table 2.2. Central composite experimental matrix for two influencing factors

| No. | X1 | X2 |
|---|---|---|
| 1 | -1 | -1 |
| 2 | +1 | -1 |
| 3 | -1 | +1 |
| 4 | +1 | +1 |
| 5 | -1 | 0 |
| 6 | +1 | 0 |
| 7 | 0 | +1 |
| 8 | 0 | -1 |
| 9 | 0 | 0 |
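A matrix of this kind can be generated programmatically. The sketch below assumes star points placed at the coded levels -1 and +1 (a face-centered plan), which is what the levels in Table 2.2 suggest; the function name `face_centered_ccd` is hypothetical, and the row order it produces differs from the table although the set of points is the same.

```python
from itertools import product


def face_centered_ccd(k, n_center=1):
    """Build a central composite matrix with star points at +/-1 (assumed)."""
    # Core (factorial) experiments: all 2^k combinations of -1 and +1.
    core = [list(p) for p in product((-1, 1), repeat=k)]
    # Star experiments: one factor at -1 or +1, all others at the base level.
    star = []
    for i in range(k):
        for level in (-1, 1):
            point = [0] * k
            point[i] = level
            star.append(point)
    # Center experiments: every factor at the base level.
    center = [[0] * k for _ in range(n_center)]
    return core + star + center


matrix = face_centered_ccd(2)
for number, row in enumerate(matrix, start=1):
    print(number, row)
```

For k = 2 this gives 4 core, 4 star and 1 center experiment, nine in total.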

b. Conduct the experiment

Conduct the experiments according to the established matrix, repeating each experiment m times. The results are then processed and checked with OPT, an American multi-factor data processing program that is copyrighted and in circulation at the Vietnam Agricultural Electromechanical Institute.

c. Determine the mathematical model

The objective function is represented by a mathematical model in the form of a quadratic regression equation with the general form [8]:

$$Y = b_0 + \sum_{i=1}^{k} b_i x_i + \sum_{i=1}^{k-1} \sum_{j=i+1}^{k} b_{ij} x_i x_j + \sum_{i=1}^{k} b_{ii} x_i^2 \tag{2.27}$$

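In which: $k$ - Number of influencing factors. The coefficients are:

$$b_0 = a \sum_{u=1}^{N} y_u - p \sum_{i=1}^{k} \sum_{u=1}^{N} x_{iu}^2 y_u \tag{2.28}$$

$$b_i = e \sum_{u=1}^{N} x_{iu} y_u \tag{2.29}$$

$$b_{ij} = g \sum_{u=1}^{N} x_{iu} x_{ju} y_u \tag{2.30}$$

$$b_{ii} = c \sum_{u=1}^{N} x_{iu}^2 y_u + d \sum_{j=1}^{k} \sum_{u=1}^{N} x_{ju}^2 y_u - p \sum_{u=1}^{N} y_u \tag{2.31}$$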

In the computer program, the auxiliary constants a, c, d, e, g, p are calculated first; the coefficients $b_0$, $b_i$, $b_{ii}$, $b_{ij}$ are then determined, and with them the mathematical model.
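As an illustration of how the coefficients of the quadratic model (2.27) can be obtained for two factors, the sketch below uses ordinary least squares through the normal equations instead of the closed-form constants a, c, d, e, g, p. This is a swapped-in technique, not the OPT program described above. The response values are synthetic (generated from hypothetical coefficients `true_b`), so the fit should simply recover them.

```python
def design_row(x1, x2):
    """Terms of (2.27) for two factors: 1, x1, x2, x1*x2, x1^2, x2^2."""
    return [1.0, x1, x2, x1 * x2, x1 * x1, x2 * x2]


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_quadratic(points, y):
    """Least-squares estimates (b0, b1, b2, b12, b11, b22)."""
    rows = [design_row(x1, x2) for x1, x2 in points]
    p = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yu for r, yu in zip(rows, y)) for i in range(p)]
    return solve(xtx, xty)


# The nine coded points of a two-factor central composite matrix.
points = [(-1, -1), (1, -1), (-1, 1), (1, 1), (-1, 0), (1, 0), (0, 1), (0, -1), (0, 0)]
# Hypothetical coefficients used only to generate noise-free synthetic responses.
true_b = [10.0, 2.0, -1.0, 0.5, -3.0, 1.5]
y = [sum(b * t for b, t in zip(true_b, design_row(*pt))) for pt in points]
b = fit_quadratic(points, y)
print([round(value, 6) for value in b])
```

With real measurements, `y` would hold the mean response of each experiment in the matrix.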

d. Check for homogeneity of variance

Homogeneity of variance is tested using Cochran's criterion. If the calculated value $G_{tt}$ is smaller than the tabulated value $G_b$ ($G_{tt} < G_b$), the hypothesis $H_0$ does not contradict the experimental data and the variances in the experiments are considered homogeneous. This allows the noise intensity to be regarded as stable when the parameters are changed during the experiment.
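A minimal sketch of the calculated Cochran statistic follows, assuming the usual definition (largest run variance divided by the sum of all run variances) and an equal number of repetitions per experiment. The replicate values are hypothetical, and the tabulated value $G_b$ must still be looked up by the reader.

```python
from statistics import variance


def cochran_g(replicates):
    """Ratio of the largest sample variance to the sum of all variances."""
    variances = [variance(row) for row in replicates]
    return max(variances) / sum(variances)


# Hypothetical data: three experiments, each repeated three times.
runs = [
    [10.1, 9.9, 10.0],
    [12.2, 11.8, 12.0],
    [8.9, 9.1, 9.0],
]
g_tt = cochran_g(runs)
print(round(g_tt, 4))
```

The variances are treated as homogeneous when `g_tt` is smaller than the tabulated value for the chosen significance level.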

e. Check the significance of the regression coefficients

The regression coefficients $b_0$, $b_i$, $b_{ii}$, $b_{ij}$ of the equation are tested for significance by Student's test. The variances of the regression coefficients are calculated first [8]:

$$S_{b_0}^2 = a S_y^2 \tag{2.32}$$

$$S_{b_{ii}}^2 = (c + d) S_y^2 \tag{2.33}$$

$$S_{b_i}^2 = e S_y^2 \tag{2.34}$$

$$S_{b_{ij}}^2 = g S_y^2 \tag{2.35}$$

Here $S_y^2$ is the experimental variance:

$$S_y^2 = \frac{1}{N(m-1)} \sum_{u=1}^{N} \sum_{j=1}^{m} (y_{uj} - \bar{y}_u)^2 \tag{2.36}$$

A regression coefficient is significant when:

$$|b_0| > t \sqrt{S_{b_0}^2} \tag{2.37}$$

$$|b_i| > t \sqrt{S_{b_i}^2} \tag{2.38}$$

$$|b_{ij}| > t \sqrt{S_{b_{ij}}^2} \tag{2.39}$$

$$|b_{ii}| > t \sqrt{S_{b_{ii}}^2} \tag{2.40}$$

$t$ - Student's critical value, looked up in the statistical table with significance level $\alpha = 0.05$ and degrees of freedom $\gamma = N(m-1)$.

If the coefficients calculated according to formulas (2.28) to (2.31) do not satisfy conditions (2.37) to (2.40), they are insignificant and can be dropped from the regression equation.
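The significance check can be sketched as follows, assuming the coefficient variances have already been calculated and the critical value t has been read from a Student table. All numbers here, including `t_table = 2.0`, are hypothetical placeholders rather than results of the study.

```python
from math import sqrt


def significant(coeffs, variances, t_table):
    """Mark each coefficient as significant when |b| > t * sqrt(S_b^2)."""
    return {
        name: abs(value) > t_table * sqrt(variances[name])
        for name, value in coeffs.items()
    }


# Hypothetical coefficients and variances, for illustration only.
coeffs = {"b0": 10.0, "b1": 2.0, "b12": 0.1}
variances = {"b0": 0.04, "b1": 0.01, "b12": 0.01}
flags = significant(coeffs, variances, t_table=2.0)
print(flags)
```

Coefficients flagged `False` are the candidates for removal from the regression equation.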

g. Check the compatibility of the regression equation

After removing the insignificant coefficients from model (2.27), we obtain the empirical regression equation. It needs to be tested by Fisher's criterion.

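$$F_{tt} = \frac{m S^2}{S_e^2} \tag{2.41}$$

$$S^2 = \frac{\sum_{u=1}^{N} (\bar{y}_u - \hat{y}_u)^2}{N - k^*} \tag{2.42}$$

$$S_e^2 = \frac{\sum_{u=1}^{N} \sum_{i=1}^{m_u} (y_{ui} - \bar{y}_u)^2}{\sum_{u=1}^{N} m_u - N} \tag{2.43}$$

Here $\bar{y}_u$ is the mean of the repetitions of experiment $u$, $\hat{y}_u$ is the value predicted by the regression equation, and $m_u$ is the number of repetitions of experiment $u$ ($m_u = m$ when all experiments are repeated equally).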

The tabulated value $F_b$ is looked up in the table [8] with degrees of freedom $\gamma_1 = N - k^*$ and $\gamma_2 = N(m-1)$, where $k^*$ is the number of regression coefficients.

If $F_{tt} < F_b$, the model is compatible; if $F_{tt} > F_b$, the model is not compatible. In this case, to obtain a compatible model, one of the following solutions can be chosen:

+ Make the model more complex by raising its order.

+ Repeat the second-order experiment with a smaller variation of the input parameters.
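The calculated Fisher ratio can be sketched as below. The sketch assumes an equal number of repetitions m per experiment and the usual lack-of-fit form: the variance of the run means around the model predictions, multiplied by m, divided by the variance of the repetitions around their own means. The data and the function name `lack_of_fit_f` are hypothetical.

```python
def lack_of_fit_f(replicates, predicted, n_coeffs):
    """Return F = m * S^2 / S_e^2 for equally repeated experiments."""
    n = len(replicates)
    m = len(replicates[0])
    means = [sum(row) / m for row in replicates]
    # Variance of the run means around the model predictions.
    s2_fit = sum((yb - yh) ** 2 for yb, yh in zip(means, predicted)) / (n - n_coeffs)
    # Variance of the repetitions around their own run mean.
    s2_error = sum(
        (y - yb) ** 2 for row, yb in zip(replicates, means) for y in row
    ) / (n * (m - 1))
    return m * s2_fit / s2_error


# Hypothetical data: four experiments, two repetitions each, two coefficients.
replicates = [[1.0, 3.0], [4.0, 6.0], [5.0, 7.0], [9.0, 11.0]]
predicted = [2.5, 4.5, 6.5, 9.5]
f_tt = lack_of_fit_f(replicates, predicted, n_coeffs=2)
print(f_tt)
```

The value is then compared with the tabulated $F_b$ for $N - k^*$ and $N(m-1)$ degrees of freedom.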

h. Recalculate the regression coefficients

If the model contains insignificant coefficients, they are removed from the model. The remaining coefficients related to them need to be recalculated:

+ When the free term $b_0$ is removed, the recalculated coefficient $b_{ii}$ is denoted $b_{ii}^*$:

$$b_{ii}^* = b_{ii} + \frac{p}{a} b_0 \tag{2.44}$$

Some of the auxiliary constants also change and need to be recalculated, after which the new coefficients are checked:

$$a^* = p^* = 0 \tag{2.45}$$

$$d^* = d - \frac{p^2}{a} \tag{2.46}$$

$$c^* = c \tag{2.47}$$

+ When $m$ coefficients $b_{ii}$ are removed ($1 \le m < n - 1$), the coefficients $b_i$ and $b_{ij}$ do not need to be recalculated because they are not related to them. Only the remaining coefficients $b_{ii}$ and $b_0$ are recalculated:

$$b_0^* = a^* \sum_{u=1}^{N} y_u - p^* \sum_{j=1}^{n} \sum_{u=1}^{N} x_{ju}^2 y_u \tag{2.48}$$

$$b_{ii}^* = c^* \sum_{u=1}^{N} x_{iu}^2 y_u + d^* \sum_{j=1}^{n} \sum_{u=1}^{N} x_{ju}^2 y_u - p^* \sum_{u=1}^{N} y_u \tag{2.49}$$

In which, for each removed coefficient $b_{jj}$:

$$\sum_{u=1}^{N} x_{ju}^2 y_u = 0 \tag{2.50}$$

$$a^* = a - \frac{m\,p}{c}\, p^* \tag{2.51}$$

$$c^* = c; \quad d^* = \frac{p^* d}{p} \tag{2.52}$$

After removing the insignificant coefficients and recalculating the others, it is not necessary to test the significance of the new coefficients again by the full procedure. If the model becomes incompatible, the original model must be used.

i. Check the working ability of the regression equation

The regression model is built to predict the value of the function y at the coordinates of interest and to solve the optimization problem. The purpose of this test is to confirm whether the model really reflects the influence of the factors on the indicator function. If the model is workable, the value Y predicted at a given coordinate has an error at least 2 times smaller than that obtained by assigning to that coordinate the average value $\bar{y}$ calculated over all experiments:

$$\bar{y} = \frac{1}{Nm} \sum_{u=1}^{N} \sum_{j=1}^{m} y_{uj} = \frac{1}{N} \sum_{u=1}^{N} \bar{y}_u \tag{2.53}$$

The model is considered workable (useful for forecasting) if the coefficient of determination $R^2 \ge 0.75$:

$$R^2 = 1 - \frac{m (N - K^*) S^2 + N (m - 1) S_e^2}{m \sum_{u=1}^{N} (\bar{Y}_u - \bar{Y})^2 + N (m - 1) S_e^2} \tag{2.54}$$

In which: $m$ - Number of repetitions of each experiment;

$K^*$ - Number of coefficients of the regression equation.
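The coefficient of determination can be sketched in the same spirit, assuming equal repetitions. The numerator is the sum of the lack-of-fit and pure-error sums of squares, and the denominator is the total sum of squares around the grand mean; written this way, the factor $N - K^*$ cancels against the denominator of $S^2$. The data are hypothetical.

```python
def r_squared(replicates, predicted):
    """R^2 = 1 - (lack-of-fit SS + error SS) / total SS, equal repetitions."""
    n = len(replicates)
    m = len(replicates[0])
    means = [sum(row) / m for row in replicates]
    grand_mean = sum(means) / n
    ss_error = sum((y - yb) ** 2 for row, yb in zip(replicates, means) for y in row)
    ss_fit = m * sum((yb - yh) ** 2 for yb, yh in zip(means, predicted))
    ss_total = m * sum((yb - grand_mean) ** 2 for yb in means) + ss_error
    return 1 - (ss_fit + ss_error) / ss_total


# Hypothetical data: four experiments, two repetitions each.
replicates = [[1.0, 3.0], [4.0, 6.0], [5.0, 7.0], [9.0, 11.0]]
predicted = [2.5, 4.5, 6.5, 9.5]
r2 = r_squared(replicates, predicted)
print(round(r2, 4), r2 >= 0.75)
```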

k. Convert the regression equation to real form

To describe the influence of research parameters on the indicators of interest, it is necessary to convert the regression equation into real form with variables being natural parameters with dimensions.

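Substituting formula (2.26) into the coded regression equation, we get:

$$Y = a_0 + \sum_{i=1}^{n} a_i X_i + \sum_{i=1}^{n} \sum_{j=i}^{n} a_{ij} X_i X_j \tag{2.55}$$

The coefficients $a_0$, $a_i$, $a_{ij}$ are determined from the coded regression coefficients:

$$a_0 = b_0 - \sum_{i=1}^{n} \frac{b_i X_{i0}}{\varepsilon_i} + \sum_{i=1}^{n} \sum_{j=i}^{n} \frac{b_{ij} X_{i0} X_{j0}}{\varepsilon_i \varepsilon_j} \tag{2.56}$$

$$a_i = \frac{b_i}{\varepsilon_i} - \sum_{j \ne i} \frac{b_{ij} X_{j0}}{\varepsilon_i \varepsilon_j} - \frac{2 b_{ii} X_{i0}}{\varepsilon_i^2} \tag{2.57}$$

$$a_{ij} = \frac{b_{ij}}{\varepsilon_i \varepsilon_j}, \quad i \ne j \tag{2.58}$$

$$a_{ii} = \frac{b_{ii}}{\varepsilon_i^2} \tag{2.59}$$

In which: $\varepsilon_i$ - Conversion factor (range of variation of factor $i$);

$X_i$ - Natural values of the parameters;

$X_{i0}$ - Base level of factor $i$.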
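The conversion to real form can be checked numerically for two factors. The sketch below expands the coded quadratic model after substituting $x_i = (X_i - X_{i0}) / \varepsilon_i$; the coefficient values, base levels and ranges are hypothetical, and the function names are illustrative only.

```python
def to_real_form(b, x0, eps):
    """Convert coded (b0, b1, b2, b12, b11, b22) to real-form coefficients."""
    b0, b1, b2, b12, b11, b22 = b
    x01, x02 = x0
    e1, e2 = eps
    a0 = (b0 - b1 * x01 / e1 - b2 * x02 / e2
          + b12 * x01 * x02 / (e1 * e2)
          + b11 * x01 ** 2 / e1 ** 2 + b22 * x02 ** 2 / e2 ** 2)
    a1 = b1 / e1 - b12 * x02 / (e1 * e2) - 2 * b11 * x01 / e1 ** 2
    a2 = b2 / e2 - b12 * x01 / (e1 * e2) - 2 * b22 * x02 / e2 ** 2
    a12 = b12 / (e1 * e2)
    a11 = b11 / e1 ** 2
    a22 = b22 / e2 ** 2
    return a0, a1, a2, a12, a11, a22


def quadratic(c, u, v):
    """Evaluate c0 + c1*u + c2*v + c12*u*v + c11*u^2 + c22*v^2."""
    c0, c1, c2, c12, c11, c22 = c
    return c0 + c1 * u + c2 * v + c12 * u * v + c11 * u * u + c22 * v * v


# Hypothetical coded coefficients, base levels and variation ranges.
b = (10.0, 2.0, -1.0, 0.5, -3.0, 1.5)
x0, eps = (300.0, 50.0), (100.0, 10.0)
a = to_real_form(b, x0, eps)

# The coded and real forms must agree at any point of the factor space.
for X1, X2 in [(200.0, 40.0), (300.0, 50.0), (350.0, 45.0), (400.0, 60.0)]:
    coded = quadratic(b, (X1 - x0[0]) / eps[0], (X2 - x0[1]) / eps[1])
    real = quadratic(a, X1, X2)
    print(X1, X2, round(coded, 6), round(real, 6))
```

Agreement of the two columns at every point confirms that the real-form coefficients describe the same surface as the coded model.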